In this retrospective study utilizing the mint Lesion™ software[1], researchers found that routine clinical interpretations frequently resulted in the overdiagnosis of progressive disease compared to formal RECIST 1.1 interpretations. This discrepancy could lead to premature cessation of effective treatments for patients participating in cancer clinical trials and those receiving standard care.

The study aimed to determine how often routine clinical interpretations and formal RECIST 1.1 interpretations disagreed on progressive disease (PD) in patients enrolled in solid tumor clinical trials, and to investigate the causes of that discordance.

The study included 1053 adult patients with extracranial solid tumors participating in clinical trials at the Siteman Cancer Center between January and July 2021. Imaging studies were first read by clinical staff radiologists without the use of response criteria (routine clinical interpretations, RCIs) and subsequently reviewed by core laboratory readers according to RECIST 1.1 (core laboratory interpretations, CLIs). Both groups of radiologists had access to clinical and laboratory data and prior imaging study reports.

The clinical radiologists used standard PACS software to review imaging studies and selected prior studies for comparison with the current study. They also chose which lesions to measure if measurements were necessary. By contrast, the core laboratory readers used the RECIST 1.1 module in the mint Lesion™ software to review imaging studies. The software tracked target and nontarget lesions identified at baseline and new lesions subsequently identified over serial studies. It also facilitated measurements of target lesions, often aided by lesion segmentation tools that automatically detected the long and short axes of the lesion. Based on these measurements and assessments, the software calculated the sum of the diameters (SOD) of the target lesions and determined a target lesion response.
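As a rough illustration of the SOD calculation and target lesion response described above, the following Python sketch applies the published RECIST 1.1 target lesion thresholds: at least a 30% decrease in SOD from baseline for partial response, and at least a 20% increase from the nadir (the smallest SOD on study) together with an absolute increase of at least 5 mm for progressive disease. This is not the mint Lesion™ implementation. The function names and example measurements are hypothetical, and special cases such as the lymph node short-axis rule for complete response are simplified.

```python
from typing import Sequence


def sum_of_diameters(diameters_mm: Sequence[float]) -> float:
    """Sum target lesion diameters in mm (long axis for non-nodal lesions,
    short axis for lymph nodes)."""
    return float(sum(diameters_mm))


def target_lesion_response(baseline_sod: float, nadir_sod: float,
                           current_sod: float) -> str:
    """Classify target lesion response with simplified RECIST 1.1 thresholds.

    Assumption: complete response is modeled as SOD == 0, which ignores the
    rule that nodal target lesions only need a short axis below 10 mm.
    """
    if current_sod == 0:
        return "CR"

    # PD: >= 20% increase over the nadir AND >= 5 mm absolute increase.
    increase = current_sod - nadir_sod
    relative_ok = nadir_sod == 0 or increase / nadir_sod >= 0.20
    if relative_ok and increase >= 5.0:
        return "PD"

    # PR: >= 30% decrease relative to the baseline SOD.
    if baseline_sod > 0 and (baseline_sod - current_sod) / baseline_sod >= 0.30:
        return "PR"

    return "SD"


# Hypothetical measurements (mm) for three target lesions.
baseline = sum_of_diameters([22.0, 15.0, 30.0])   # 67.0
current = sum_of_diameters([20.0, 14.0, 27.0])    # 61.0
print(target_lesion_response(baseline, nadir_sod=50.0, current_sod=current))
```

Because PD is measured against the nadir rather than the most recent study, comparing against the wrong prior study or mismeasuring a single lesion can push a case across the threshold, which is consistent with the measurement errors the study reports.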

The results showed that among 327 patients with a PD assessment on either RCI or CLI, the two interpretations agreed in 65% of cases (213 of 327). In 32% of cases (105 of 327), RCIs overdiagnosed PD when CLIs diagnosed stable disease, while in 3% of cases (9 of 327), CLIs diagnosed PD when RCIs diagnosed stable disease.
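The reported percentages can be checked directly from the counts. The short Python sketch below uses only the figures quoted above; the category labels and the helper name `percent_breakdown` are illustrative and do not come from the study.

```python
def percent_breakdown(counts: dict[str, int]) -> dict[str, int]:
    """Return each category's share of the total, rounded to a whole percent."""
    total = sum(counts.values())
    return {label: round(100 * n / total) for label, n in counts.items()}


# Counts as reported for the 327 patients with a PD assessment.
pd_counts = {
    "RCI and CLI agree": 213,
    "RCI PD, CLI stable disease": 105,
    "CLI PD, RCI stable disease": 9,
}

assert sum(pd_counts.values()) == 327  # the three groups account for all patients
print(percent_breakdown(pd_counts))
```

The three groups sum to 327, and the rounded shares are 65%, 32%, and 3%, matching the reported results.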

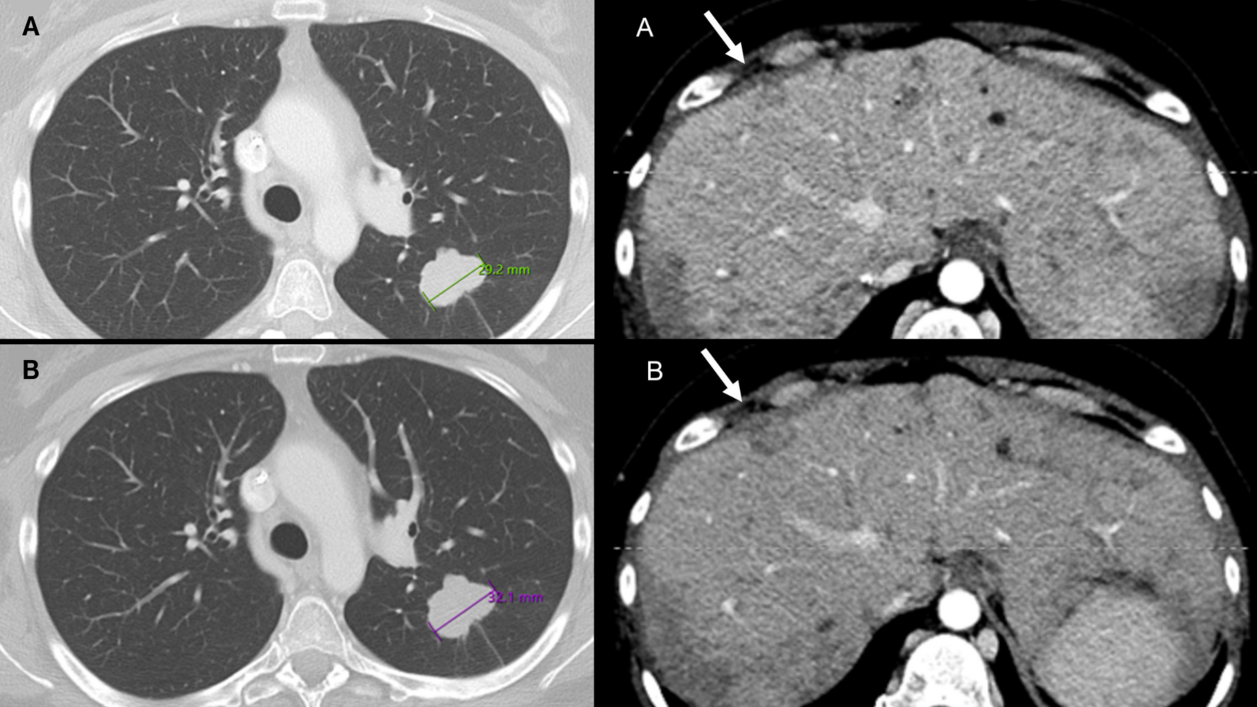

The reasons for discrepant RCIs of PD included:

- erroneous target lesion measurements,

- erroneous diagnosis of nontarget progression, and

- misclassification of new lesions as cancer.

Overall, the study demonstrated considerable variability in the assessment of PD between RCIs, which are the current standard of care, and CLIs. This variability has the potential to affect patient care and trial outcomes.

"Our data supports the use of a local imaging core laboratory to minimize response assessment errors in clinical trials. To further translate these findings to conventional clinical workflows and improve the accuracy of imaging assessments for patients with cancer moving forward, multiple changes are needed", declared the authors.

The suggested changes include:

- Ensuring Access to Baseline Study Information: Radiologists need to know the date of the baseline study before the start of the current therapy when evaluating subsequent follow-up studies. In conventional electronic medical records, this information may not be easily accessible to the radiologist. Therefore, changes in how studies are ordered and how information is communicated to the interpreting radiologist are necessary to ensure access to this crucial information.

- Formal Training in RECIST and Other Response Assessment Criteria: To ensure more standardized and accurate response evaluations, radiologists should receive formal training in the principles and practice of RECIST and other response assessment criteria.

- Incorporating Standardized Language in Radiology Reports: The authors suggest incorporating standardized language that aligns with RECIST terminology into radiology reports. Standardized terminology can reduce the potential for misinterpretation by treating physicians and enhance communication about treatment response.

- Utilizing Artificial Intelligence: AI applications have the potential to streamline workflow for busy radiologists by assisting with lesion segmentation and tracking over time, making quantitative response assessments more practical in routine reporting. This can further improve the accuracy and efficiency of response evaluations.

By implementing these changes, the authors believe that imaging assessments for cancer patients can be significantly enhanced, leading to better patient care and more reliable outcomes in clinical trials.

[1] Marilyn J. Siegel, Joseph E. Ippolito, Richard L. Wahl, and Barry A. Siegel. Discrepant Assessments of Progressive Disease in Clinical Trials between Routine Clinical Reads and Formal RECIST 1.1 Interpretations. Radiology: Imaging Cancer, 2023.